Capture data changes with timestamp-based queries¶

Use case¶

Data, such as master data changes or new transactions, must be moved from a source to a target. A periodic query, underpinned by a schedule, will include a condition that uses a timestamp to select the records from the source that should be processed into the target.

There are several considerations:

- Persistent storage of the timestamp data

- Avoid an 'infinite loop', where the changes made by Jitterbit to the target are picked up and applied back to the source, and so on.

- Isolation of intended changes from unintended changes in the source, such as data changed by a trigger should not be included, but data changed by a user should.

There are pros and cons to this approach vs an event-based/real-time push approach.

- Pros:

- If target is unavailable, the source will continue to try to move the data until it is complete.

- Can 'move back' the timestamp to allow for re-runs

- Can get started relatively quickly

- Easier to develop and test by using smaller data sets.

- Can better control the size of data to process.

- If a 'write-back' to the source is implemented, then you can trace the integration activity from source to target.

- Cons:

- Only applicable if a timestamp is available, or a query condition can be constructed.

- Polling/Queries can be more complicated than Event-Based.

- The former requires a timestamp data store, queries with conditions and possibly other filters, and is sensitive to schema changes in the source. In the latter, Jitterbit is simply receiving a payload. As long as that schema does not change, then the systems are more loosely coupled, which is an advantage from a development standpoint.

- Move integration tool administrative overhead. For example, if you need to stop a flow or need to change/update the schedule in Jitterbit, which is a software configuration change. For many companies, making a change comes under a software-control process and requires more steps. With an event-driven process, the change is made in the source.

- Since timestamps are usually system generated, they do not accommodate business rules, which leads to including business rules in the middleware and extensive scripting. From an enterprise architecture standpoint, business rules should be held in the applications only. As we will see in this example, there is extensive business rule scripting.

Note

This design pattern uses Design Studio as an example; you may apply the same concepts in Cloud Studio using similar steps.

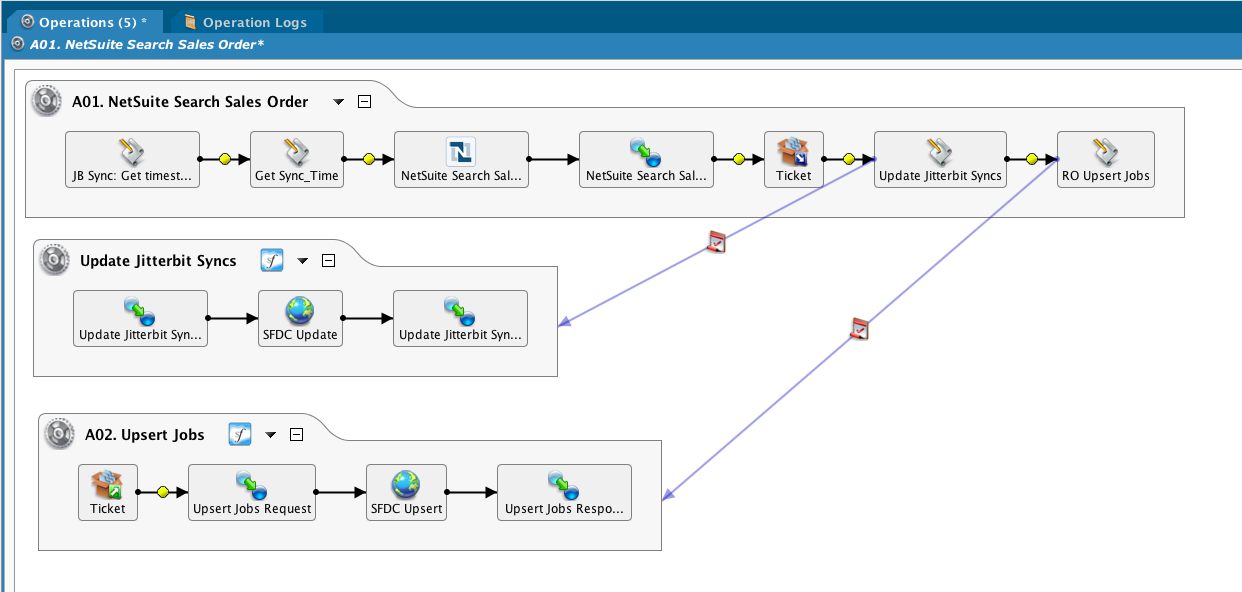

Example¶

Query NetSuite, update Salesforce

JB Sync: Get timestamp script¶

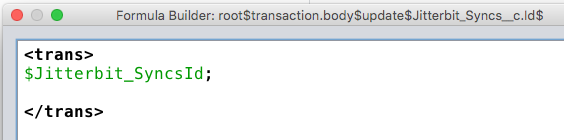

The timestamps are stored in SFDC, and a query gets the sync ID and the timestamp itself. Note that timestamps are UTC, and the values are returned in an array.

$result = SfLookupAll("<TAG>Salesforce Orgs/sysadmin@example.com</TAG>",

"Select Id, Object_Last_Modified_Date_del__c from Jitterbit_Syncs__c where Object_Name_del__c = 'Ticket'");

$Jitterbit_SyncsId = Get($result,0,0);

$synctimestampticket = Get($result,0,1);

Get Sync_Time¶

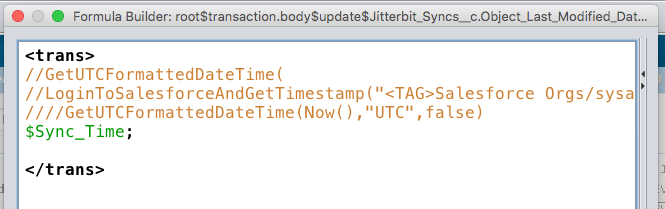

Use a salesforce function to get the current time, which will be used to update the JB Sync record. Note that this is being done before the NS query instead of after the SFDC upsert, which is the more traditional method. However, it was discovered that records were being missed since changes were being made in the source that happened between the time the query was made and the time the upsert finished. The decision was made to use this approach, even at the expense of picking up the same records in the source query. Also note that there is no 'write-back' to the selected records to update it based on a successful upsert into SFDC. If that were implemented, then a query could be constructed that would not pick up duplicate records.

$Sync_Time = GetUTCFormattedDateTime(

LoginToSalesforceAndGetTimestamp("<TAG>Salesforce Orgs/sysadmin@example.com</TAG>","UTC"),"UTC",false);

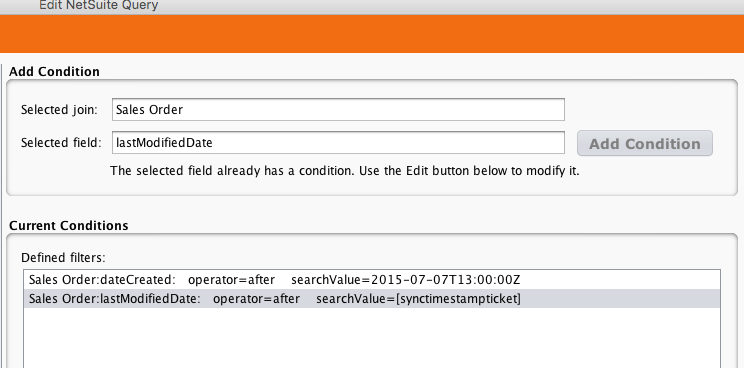

NetSuite search¶

Pass the synctimestampticket global variable to the query

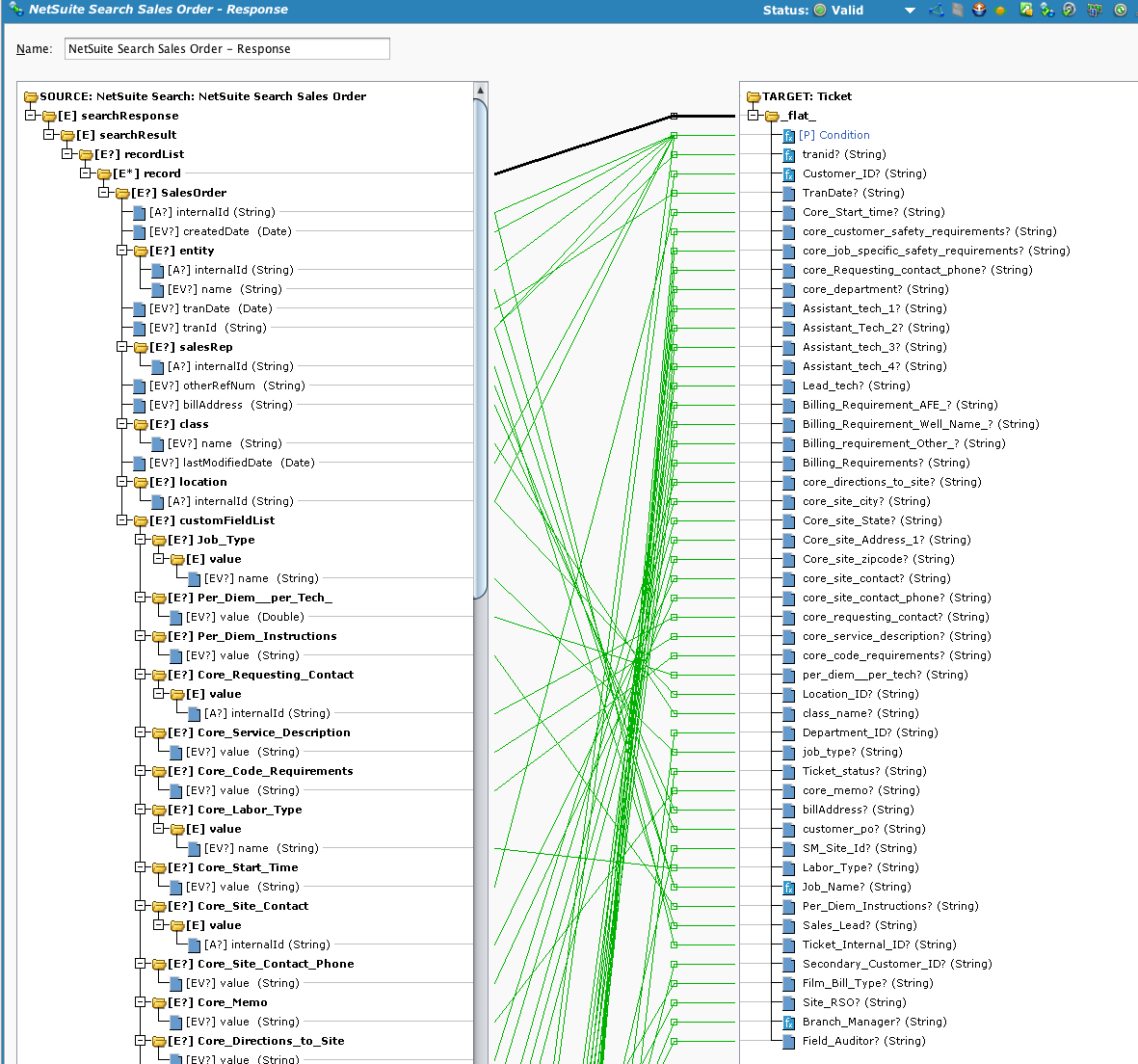

Mapping¶

A condition is used to further filter the data.

Condition¶

The requirement was to filter out certain sites based on dates until the nationwide roll out was complete. Since the query does not filter based on the changing date requirements, a condition is used inside the transformation.

The incoming data is written to the operation log. Then the location internal IDs are checked, and if the location is in scope, the record's last modified by value is checked and if made by Jitterbit, is flagged to be skipped.

Since the LMB values are environment-dependent, then a check is made to the current environment.

Next, the record is checked against a list of date ranges since records before a certain time are excluded. If record passes all the tests, it will be used.

WriteToOperationLog(

"----------"+"\r\n"+

"Int id: "+searchResponse$searchResult$recordList$record.SalesOrder$internalId+"\r\n"+

"Ord id: "+searchResponse$searchResult$recordList$record.SalesOrder$tranId$ +"\r\n"+

"LMD: " +searchResponse$searchResult$recordList$record.SalesOrder$lastModifiedDate$+"\r\n"+

"Loc: " +searchResponse$searchResult$recordList$record.SalesOrder$location$internalId+"\r\n"+

"LMB: " +searchResponse$searchResult$recordList$record.SalesOrder$customFieldList$Last_Modified_By$value$internalId+"\r\n"+

"Cust:" +searchResponse$searchResult$recordList$record.SalesOrder$entity$internalId);

If(searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==25||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==6||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==3||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==10||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==4||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==18||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==26||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==29||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==16||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==22||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==14||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==15||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==35||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==19||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==17||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==12||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==84,

$loccheck = true,

$loccheck = false);

//exclude changes in NS made by Jitterbit

If($loccheck && GetEnvironmentName()=='DEV',

If(

searchResponse$searchResult$recordList$record.SalesOrder$customFieldList$Last_Modified_By$value$internalId=='9836'||

searchResponse$searchResult$recordList$record.SalesOrder$customFieldList$Last_Modified_By$value$internalId=='9837'||

searchResponse$searchResult$recordList$record.SalesOrder$customFieldList$Last_Modified_By$value$internalId=='9838',

$idcheck = false,

$idcheck = true));

If($loccheck && GetEnvironmentName()=='PROD',

If(

searchResponse$searchResult$recordList$record.SalesOrder$customFieldList$Last_Modified_By$value$internalId=='10780'||

searchResponse$searchResult$recordList$record.SalesOrder$customFieldList$Last_Modified_By$value$internalId=='10781'||

searchResponse$searchResult$recordList$record.SalesOrder$customFieldList$Last_Modified_By$value$internalId=='9631',

$idcheck = false,

$idcheck = true));

Case(

$loccheck==false, $result=false;WriteToOperationLog("Skip loc"),

$loccheck==true && $idcheck == false, $result = false; WriteToOperationLog("Skip id"),

$loccheck==true && $idcheck == true,$result = true; WriteToOperationLog("Pass")

);

// region & timestamp check

If($result == true && (searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==4||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==26),

//check create date not before point in time when region went live. For these two regions, that is 2015-09-13T13:00:00Z

If(Date(GetUtCFormattedDateTime(searchResponse$searchResult$recordList$record.SalesOrder$createdDate$,"UTC",false))

< Date(GetUTCFormattedDateTime("2015-09-13T13:00:00Z","UTC",false)),

$result = false;

WriteToOperationLog("Colo Region ticket before 2015-09-13"))

);

If($result == true && searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==18,

//check create date not before point in time when region went live.

If(Date(GetUtCFormattedDateTime(searchResponse$searchResult$recordList$record.SalesOrder$createdDate$,"UTC",false))

< Date(GetUTCFormattedDateTime("2015-09-20T13:00:00Z","UTC",false)),

$result = false;

WriteToOperationLog("Williston Region ticket before 2015-09-20"))

);

If($result == true && searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==29,

//check create date not before point in time when region went live.

If(Date(GetUtCFormattedDateTime(searchResponse$searchResult$recordList$record.SalesOrder$createdDate$,"UTC",false))

< Date(GetUTCFormattedDateTime("2015-09-23T16:00:00Z","UTC",false)),

$result = false;

WriteToOperationLog("Caspar Region ticket before 2015-09-23")

)

);

//AR

If($result == true && searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==16,

//check create date not before point in time when region went live.

If(Date(GetUtCFormattedDateTime(searchResponse$searchResult$recordList$record.SalesOrder$createdDate$,"UTC",false))

< Date(GetUTCFormattedDateTime("2015-10-04T12:00:00Z","UTC",false)),

$result = false;

WriteToOperationLog("AR Region ticket before 2015-10-4")

)

);

//DFW

If($result == true && searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==22||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==14||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==15||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==17||

searchResponse$searchResult$recordList$record.SalesOrder$location$internalId==35,

//check create date not before point in time when region went live.

If(Date(GetUtCFormattedDateTime(searchResponse$searchResult$recordList$record.SalesOrder$createdDate$,"UTC",false))

< Date(GetUTCFormattedDateTime("2015-10-11T08:00:00Z","UTC",false)),

$result = false;

WriteToOperationLog("DFW Region ticket before 2015-10-11")

)

);

$result

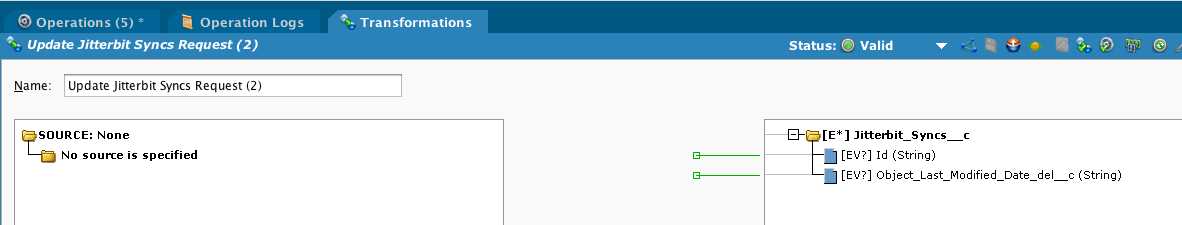

After the data is written to a temp file, Update Jitterbit Syncs is called:

Finally, the A02 operation is called if there are any records to process.