Global variable versus Temporary Storage

Introduction

Two of the most common out-of-the-box temporary storage types in Harmony are Global variables and Temporary Storage. There are several considerations to take into account when choosing one over the other.

Global variables

Global variable sources and targets (not to be confused with scripting global variables) are easy to code and reduce complexity, as described later on this page. However, they have certain limitations.

When an integration works with tiny data sets, such as web service requests and responses or small files of a few hundred records, we suggest using global variables.

When the data set is in the megabyte range, the global variable endpoint becomes slower than the equivalent temporary storage endpoint. This slowdown begins once the global variable data exceeds 4 MB.

When the data set is in the larger multi-megabyte range, there is a risk of data truncation. We recommend a limit of 50 MB to be conservative and prevent any risk of truncation occurring.
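One way to watch for these thresholds is a short script that runs after the global variable is populated. The following is a minimal sketch under stated assumptions, not an official pattern: it assumes the data is held in a global variable named `memory` (the name used in the examples later on this page), and it uses `Length()`, which counts characters, as a rough stand-in for the size in bytes. The local variable names are illustrative.

```jitterbit
<trans>
// Sketch only: approximate the size of the data held in $memory.
// Length() counts characters, so multi-byte encodings are undercounted.
approxSize = Length($memory);
slowdownLimit = 4 * 1024 * 1024;     // about 4 MB: performance starts to degrade
truncationLimit = 50 * 1024 * 1024;  // about 50 MB: conservative truncation limit

If(approxSize > truncationLimit,
  RaiseError("Data set exceeds 50 MB; use temporary storage instead."),
  If(approxSize > slowdownLimit,
    WriteToOperationLog("Data set exceeds 4 MB; consider temporary storage.")
  )
);
</trans>
```

Stopping the operation at the upper limit and only logging at the lower one mirrors the difference between a truncation risk and a performance cost; a project may reasonably choose different actions.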

Global variable endpoints in asynchronous operations require special consideration. A data set used in a global variable endpoint in an asynchronous operation is limited to 7 KB. Exceeding that limit can result in truncation. See the RunOperation() function for a description of calling an asynchronous operation.
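The 7 KB limit can be checked before the asynchronous call is made. The sketch below is one possible guard, not a documented recommendation: the operation name `Operations/Process Data` is hypothetical, the data is assumed to be in a global variable named `memory`, and `Length()` counts characters, so it only approximates the size in bytes. Falling back to a synchronous call is an assumption of this example; switching to temporary storage is another option.

```jitterbit
<trans>
// Sketch only: choose how to call the next operation based on payload size.
// 7 KB is the limit for global variable endpoints in asynchronous operations.
asyncLimit = 7 * 1024;

// If the data is too large for an asynchronous call, run synchronously
// (RunOperation is synchronous by default). Otherwise pass false as the
// second argument to request asynchronous execution.
If(Length($memory) > asyncLimit,
  RunOperation("<TAG>Operations/Process Data</TAG>"),
  RunOperation("<TAG>Operations/Process Data</TAG>", false)
);
</trans>
```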

Temporary Storage

Larger data sets, such as those used in ETL scenarios with record counts in the thousands, should be handled using temporary storage.

Unlike global variables, temporary storage shows no degradation in performance and no truncation, even with very large data sets. However, using temporary storage may require additional scripting, and you cannot take advantage of the reuse and simplicity of global variables, as described later on this page.

Note that cloud agents that are version 10.10 or higher have a temporary storage file size limit of 50 GB per file. Those who need to create temporary files larger than 50 GB will require a private agent.

Using a global variable can increase reuse and reduce complexity

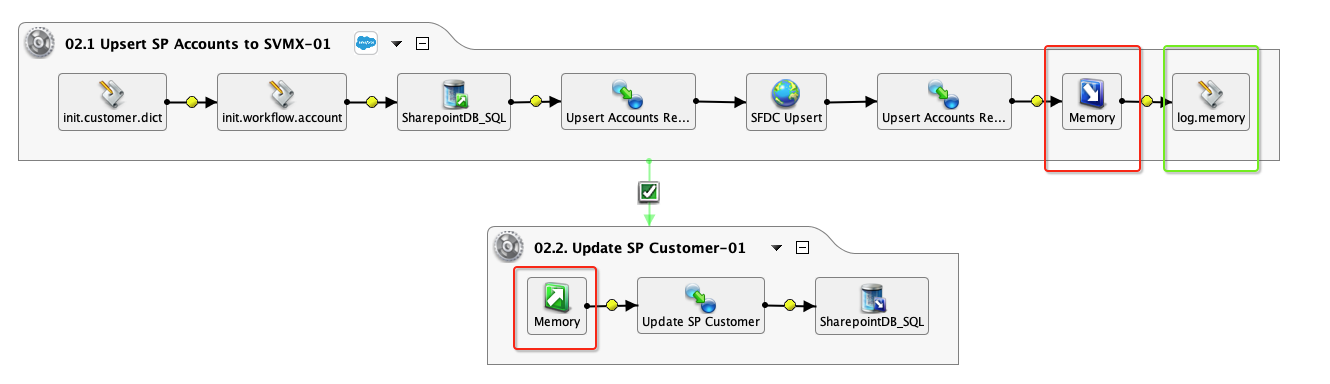

Using a global variable for tiny data sets can increase reuse and reduce complexity. For example, when building chained operations, each operation can have sources and targets. Instead of building individual source or target combinations for each operation, it is easy to use a common global variable target and source (outlined in the example below in red):

To increase reusability and standardization, you can build a reusable script that logs the content of the global variable (the script log.memory in the above example, outlined in green). This approach can also be accomplished using temporary storage, but additional scripting is needed to initialize the path and filename.
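The contents of log.memory are not shown on this page. As a rough illustration of what such a reusable logging script might contain, assuming the shared global variable is named `memory`:

```jitterbit
<trans>
// Sketch of a reusable logging script such as log.memory.
// It writes whatever the shared global variable currently holds
// to the operation log, so any operation in the chain can call it.
WriteToOperationLog("memory: " + $memory);
</trans>
```

Because every operation in the chain reads and writes the same global variable, the same script can be attached to each of them unchanged.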

A global variable's scope is the chain (the thread) of operations. Thus, global variable values are unique to a particular thread, and are destroyed when the thread is finished. This is not the case with temporary storage, which requires more handling to ensure uniqueness. The best practice is to initialize a GUID at the start of an operation chain and then pass that GUID to each of the temporary storage file names in the chain, as described in Persisting data for later processing using Temporary Storage.
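The GUID practice can be sketched as follows. This is an illustration under assumptions rather than the procedure from the referenced page: the global variable name `guid`, the `data_` prefix, and the `.csv` extension are made up for the example, and the source and target names are borrowed from the snippets later on this page. It relies on the optional file name argument accepted by `WriteFile()`, `FlushFile()`, and `ReadFile()`.

```jitterbit
<trans>
// Run once at the start of the operation chain.
$guid = Guid();

// Later scripts in the chain rebuild the same unique file name from $guid.
fileName = "data_" + $guid + ".csv";

// Write and flush the file under that name.
WriteFile("<TAG>Targets/Data</TAG>", "a,b,c", fileName);
FlushFile("<TAG>Targets/Data</TAG>", fileName);

// Read the same file back by name.
myLocalVar = ReadFile("<TAG>Sources/Data</TAG>", fileName);
</trans>
```

Because `$guid` is a global variable, it is scoped to the thread like any other, so concurrent runs of the same chain each produce their own file names.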

When performing operation unit testing, it is helpful to load test data. Using a global variable source or target makes this simple: you add a pre-operation script to write the test data to a target:

$memory = "a,b,c";

In contrast, writing data to a temporary storage file looks like this:

WriteFile("<TAG>Targets/Data</TAG>", "a,b,c");

FlushFile("<TAG>Targets/Data</TAG>");

Likewise, reading data is simpler with global variables:

myLocalVar = $memory;

In contrast, this is how you read data from temporary storage:

myLocalVar = ReadFile("<TAG>Sources/Data</TAG>");

In summary, using global variables for reading, writing, and logging operation input and output is straightforward, but take care to ensure that the data is appropriately sized.